Battle of the titans: "780 Ti vs 290X", first results

-

The RIV BE and 4930K thing is decided. I know it's an old platform, but I want to tinker and test for myself the performance difference between 4 and 6 cores. Haswell-E is still almost a year away; that's a lot of time :). Next year we'll upgrade again if we can.

Regarding the graphics, well, I don't know. I'll see.

Thanks a lot, guys.

Best regards

-

I wouldn't do it. X79 isn't worth it in cost or performance, at least until X89 arrives.

If you really want to tinker, you're better off with an Ivy Bridge or a Haswell despite their disastrous temperatures: they perform better in games right now, the platform is native PCIe 3.0 and, above all, it's much cheaper.

As for enabling filters in Surround, either you get another GTX 780 or forget it... and you can't even enable SSAA with three... getting MSAA 4x running smoothly would already be quite a lot...

Best regards

-

I do believe it. It's the only way for Nvidia to price it at €1000 again.

http://videocardz.com/47530/nvidia-geforce-gtx-780-ti-also-special-edition

That card will be released at the end of December with 6GB of memory and a $175 price increase, and that's as far as it will go: they won't release a 12GB version as rumored. The next thing will be the new series; time will tell.

-

I'll finally get my hands on one tomorrow, but only for "tinkering"...

Once again, for technical reasons beyond my control and my knowledge of liquid cooling (RL), which is zero... my rig is K.O.

Now I'm going to set up a killer 4-way TITAN... with 2 EVGA SCs as SLI masters that will eat those Tis for breakfast... Jotolillo knows this well... ;)

Meanwhile, so I don't have to make do with the iPad and to keep myself entertained, a 780 Ti is arriving. It won't stay with me unless it's the eighth wonder of the world, which it isn't.

I'll also say, as a word to the wise, that I don't spend all day buying and returning things. But thank God I've earned some reputation in this little world, which many consider unfair (and I respect them), because with some big manufacturers and wholesalers it's just the opposite: they want me to try their new products. That's why I can often, though not always, get them early. In fact, I could have had the 780 Ti today, but since I had more important things to attend to, like my work and my family, I left it for tomorrow.

In principle it's an internal analysis, but I don't mind sharing how it goes, since I see many people have already ordered one... which is a bit incredible, because they're not exactly giving them away, but it seems good products sell well despite the high markup. So you'll find out a lot firsthand tomorrow.

Best regards.

P.S. Jotole!!! I want my 4-way TITAN at 1350MHz!!! I'm stuck playing Minesweeper on the laptop and the iPad... ;)

-

You can't sit still even for a bit, hahaha… :facepalm:

Let's see what impression the GTX 780 Ti leaves on you, and be fair to it. I know it won't be a significant change compared to your battery of Titans, but judge it for what it is, a lone card with 3 GB of VRAM: how it fights and how hard it bites. Above all, how far it overclocks, to see the potential of the B1 stepping.

Regards.

-

P.S. Jotole!!! I want my 4-way TITAN at 1350MHz!!! I'm stuck playing Minesweeper on the laptop and the iPad… ;)

Hahahaha, we'll do what we can. ;) But I see you at 1400 :ugly:

Cheers…

-

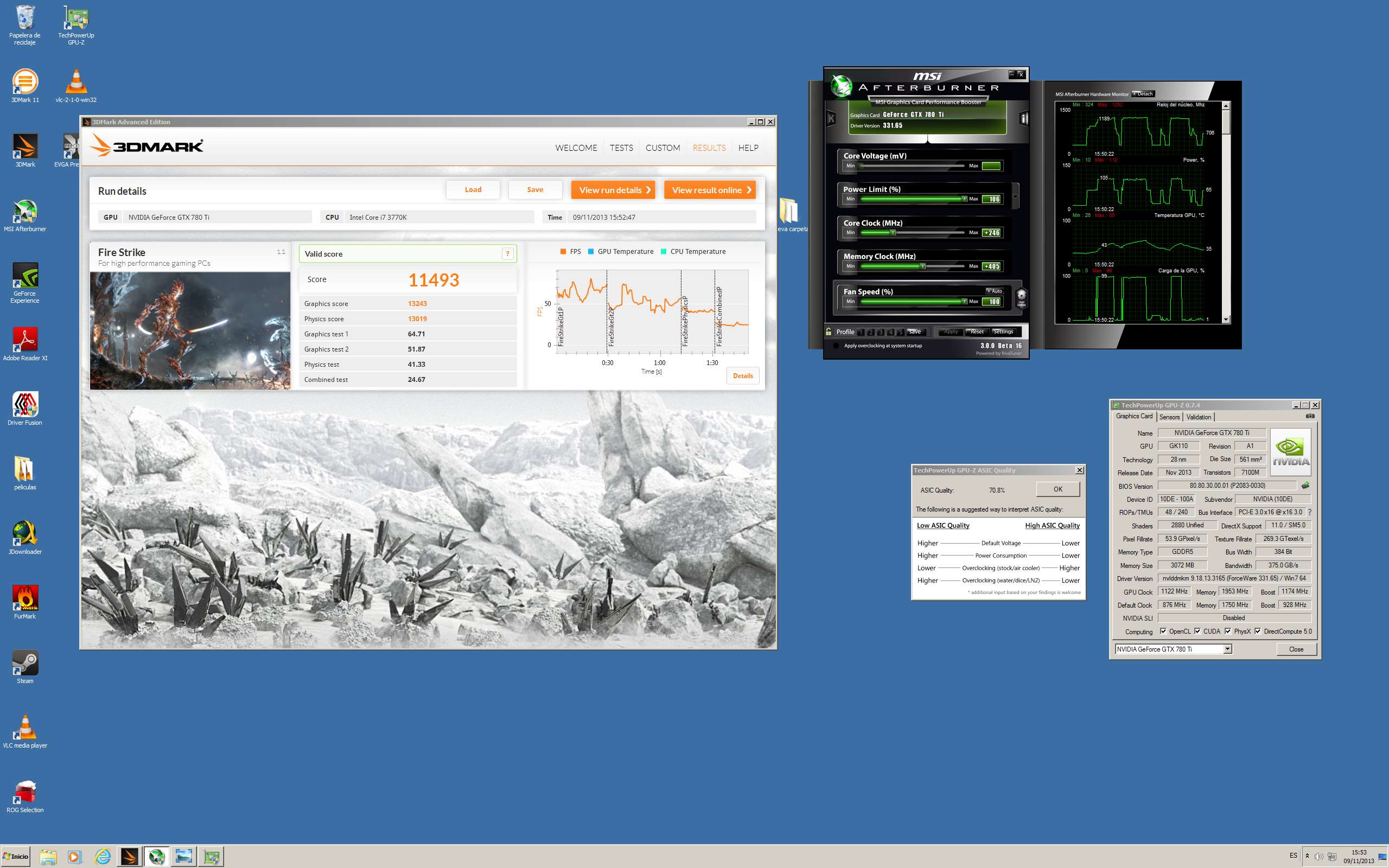

Well, I've already screwed it up.

As always, ASICs and my luck don't go together... even though it's supposed to be a new revision and all, the ASIC quality of this Gigabyte is only 70%... Basically that means it's useless for OC unless you feed it tons of voltage, and here's the curious part: incredibly, Nvidia has unlocked the voltage on these 780 Tis... up to 1.3V... but it's absolutely useless because the TDP hasn't been raised. So one thing cancels out the other: if you overclock hard or add a lot of voltage, it throttles spectacularly. Besides, running the memory at 7GHz makes power consumption skyrocket. It draws a little more than the TITAN.
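A rough way to see why the voltage unlock and the unchanged TDP cancel out: to first order, dynamic power scales with voltage squared times frequency, so under a fixed power cap every bump in voltage lowers the clock the card can sustain. The sketch below assumes that simplified model (it ignores leakage and memory power), and the constant `K` and the 250 W cap are hypothetical numbers chosen for illustration, not measurements of this card.

```python
# Simplified first-order model (assumption): dynamic power P = K * V^2 * f.
# Leakage and memory power are ignored; K and CAP_W are hypothetical values.

def max_clock_under_cap(voltage_v: float, power_cap_w: float, k: float) -> float:
    """Highest core clock (MHz) for which K * V^2 * f stays at or below the cap."""
    return power_cap_w / (k * voltage_v ** 2)

CAP_W = 250.0                      # hypothetical fixed board power limit
K = CAP_W / (1.15 ** 2 * 1200.0)   # calibrated so 1.15 V at 1200 MHz exactly hits the cap

for v in (1.15, 1.21, 1.30):
    print(f"{v:.2f} V -> sustainable clock ~{max_clock_under_cap(v, CAP_W, K):.0f} MHz")
```

In this toy model, going from 1.15 V to 1.30 V cuts the sustainable clock by roughly a fifth: extra voltage only pays off if the power limit rises with it.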

The card is a real gem: cool-running, with tremendous potential, but that's all it is, potential... because right now there's no justification for TITAN or 780 owners, much less those with unlocked BIOSes and voltage mods, to get this Ti.

Under these conditions, a TITAN at matching clocks performs even better than the Ti. Logically, that isn't normal, even though in my judgment the theoretical difference is at most 5-7%.
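As a back-of-the-envelope check on that 5-7% figure, here is the theoretical per-clock gap between the two cards. The spec numbers are the public reference specs as I understand them (they are not stated in the thread), and the FLOPS estimate assumes one FMA (2 FLOPs) per CUDA core per clock.

```python
# Reference specs (assumption: public spec-sheet values, not taken from the thread).
SPECS = {
    "GTX TITAN":  {"cores": 2688, "tmus": 224, "bus_bits": 384, "mem_gbps": 6.008},
    "GTX 780 Ti": {"cores": 2880, "tmus": 240, "bus_bits": 384, "mem_gbps": 7.0},
}

def fp32_gflops(cores: int, clock_mhz: float) -> float:
    # One FMA (2 FLOPs) per CUDA core per clock.
    return 2 * cores * clock_mhz / 1000.0

def bandwidth_gbs(bus_bits: int, mem_gbps: float) -> float:
    # Bytes moved per transfer across the bus times the per-pin data rate.
    return bus_bits / 8 * mem_gbps

clock = 1300.0  # same core clock for both cards, as in the comparison above
titan, ti = SPECS["GTX TITAN"], SPECS["GTX 780 Ti"]
shader_gain = fp32_gflops(ti["cores"], clock) / fp32_gflops(titan["cores"], clock) - 1
bw_gain = (bandwidth_gbs(ti["bus_bits"], ti["mem_gbps"])
           / bandwidth_gbs(titan["bus_bits"], titan["mem_gbps"]) - 1)
print(f"Shader throughput at equal clock: +{shader_gain:.1%}")
print(f"Memory bandwidth at stock memory clocks: +{bw_gain:.1%}")
```

At equal core clocks the shader (and TMU) gap comes out around 7%, the top of the estimate above; the roughly 16% bandwidth advantage only applies while the TITAN's memory stays at stock.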

When BIOSes come out that let it draw the power it really needs when overclocked, we'll see a splendid card... Right now it's a card pushed hard out of the box to top the benchmarks, combined with the excellent build quality, low noise and low temperatures we already know from the GK110 reference design. But that's it... honestly, I expected more...

However, it's also true that the frequency drops are most pronounced in power-hungry synthetics. In games it should have no trouble holding about 1200MHz at stock voltage, with laughably low temps and noise, which keeps it unbeatable... but I want more... so we'll have to wait for custom BIOSes to see what this card really does when squeezed.

Meanwhile... I'll stick with my TITAN...;)

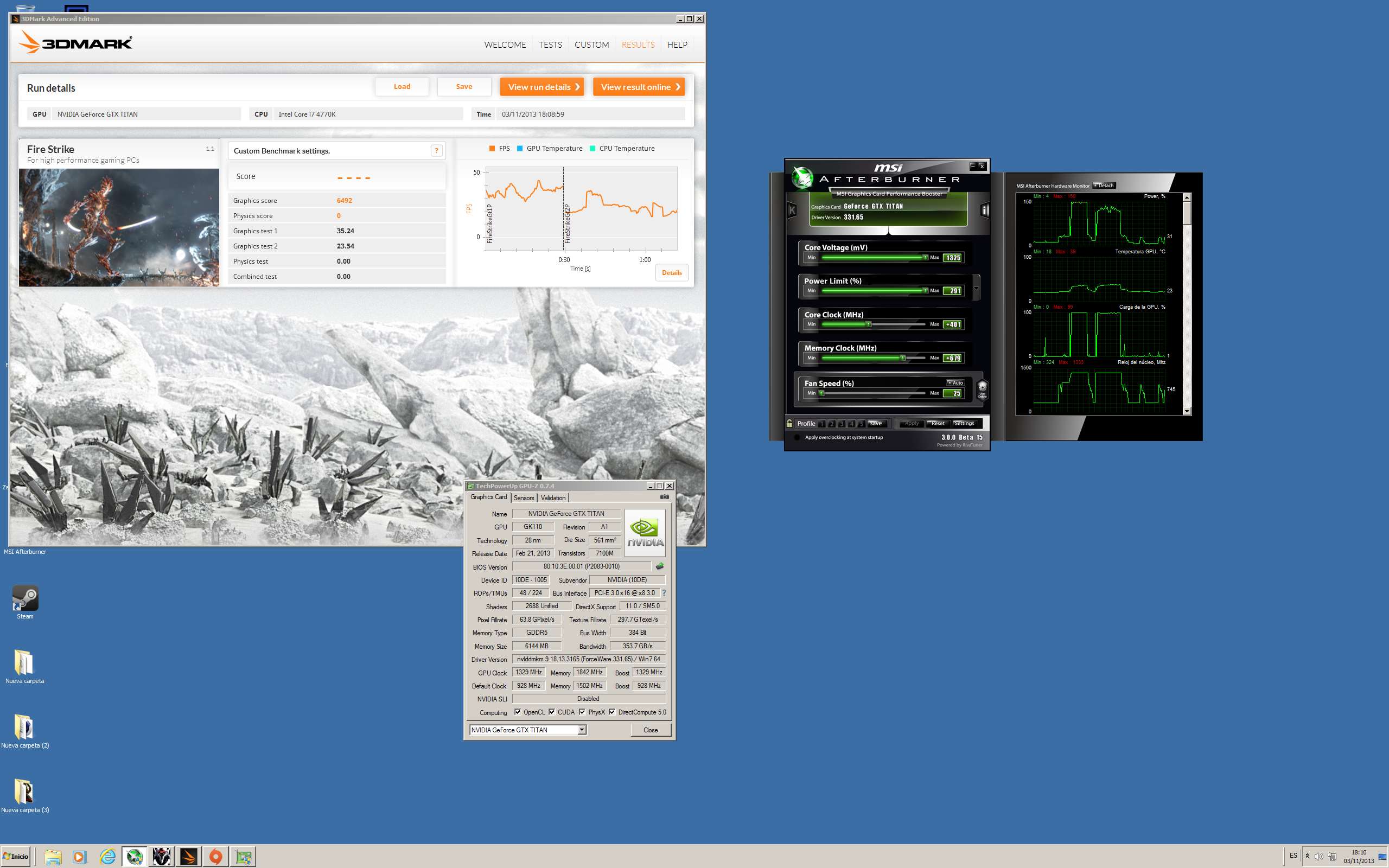

Here's an example.

I was aiming for 1250MHz... and as you can see, the power target peaked at 112% when the card only allows 106%, which caused brutal drops down to 1189MHz... However, although it practically never held 1250MHz for any length of time (I'd say it averaged around 1230MHz), the graphics score is good. Remember that I'm on Ivy Bridge, a 4-core platform, which will always lose to 2011 in the total score.

Given its ASIC, at stock voltage (I didn't even bother unlocking it), and with the memory clocked very aggressively, I'll say again that it's an acceptable result.
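To picture the drops described above, here is a toy model of a power-target limiter: if the load at the requested clock would need more than the allowed power target, the clock steps down one bin at a time until the estimate fits. This is not NVIDIA's GPU Boost algorithm; the 13 MHz bin size and the linear power-vs-clock model are assumptions, and the result is not meant to reproduce the 1189MHz seen in the screenshot.

```python
# Toy power-target limiter (hypothetical; not NVIDIA's actual GPU Boost logic).
BIN_MHZ = 13  # assumed size of one clock step

def settle_clock(target_mhz: int, power_pct_at_target: float, limit_pct: float) -> int:
    """Step the clock down from target_mhz until estimated power fits under limit_pct.

    Power (as % of TDP) is assumed to scale linearly with clock at fixed voltage.
    """
    clock = target_mhz
    while clock > 0 and power_pct_at_target * clock / target_mhz > limit_pct:
        clock -= BIN_MHZ
    return clock

# Aiming for 1250 MHz with a load that would need 112% on a card capped at 106%.
print(settle_clock(1250, 112.0, 106.0))
```

A real boost controller also reacts to temperature and voltage tables, so this only captures the "over the power target, drop a bin" part.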

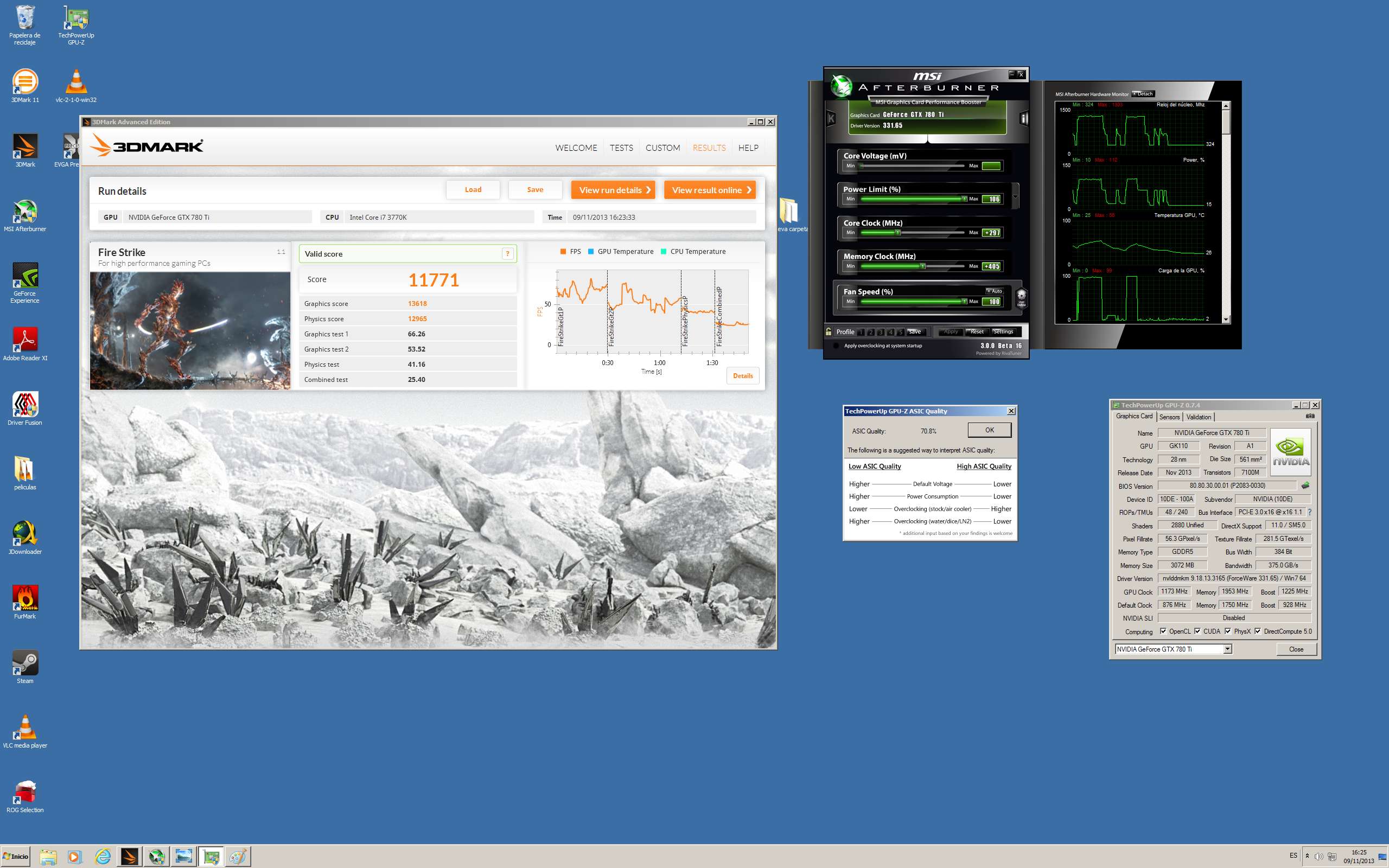

Well, now that I'm tweaking it, I must say I'm impressed.

Although obviously it didn't hold 1300MHz for even 3 seconds... the minimum was 1221MHz and it stayed above 1260MHz the rest of the time... which is surprising, since I haven't raised the voltage, only the core clock, and yet its minimum clocks were better than in the previous run with a lower core clock... Maybe we should sit down and figure out how this new 780 Ti works... it doesn't seem to be just a TDP problem... it is one, but there's something more... the card always drops by the same amount regardless of its core clock or power draw at that moment... interesting...

The truth is, you have to bow to the evidence... they really perform:

Here, despite dropping to 1211MHz... it performs the same as a TITAN at 1290MHz with a modded BIOS... and without any extra voltage on the 780 Ti... By the way, Skynet has just released a modded BIOS... ;D

Regards.

-

What a letdown the voltage unlock is: if it has a power limit in the end, it's worth nothing. I also thought the B1 revision would mean a more refined process and better ASICs, but it looks like more of the same. If factory-overclocked models come out, maybe they should pick the best ASICs so they don't drop frequency, either that or let it draw more power.

Regards

-

It's an A1, plain as day... nothing B1 about it at all... there's the GPU-Z reading.

Regards.

-

ASIC quality has nothing to do with the stepping; they're two completely different things. Besides, GPU-Z isn't known for reading ASIC quality correctly on new models, even less so when there's a stepping change.

I don't think you see many Titans/GTX 780s that reach even 1200 MHz at stock voltage, let alone 1250 MHz (I've seen several reviews consistent with ELP3's data, peaking between 1250 and 1300 MHz at stock voltage). I stress: at stock voltage.

When read correctly, the ASIC value tells us about the OC potential of a given chip, but only within a GIVEN STEPPING; it tells us nothing about capabilities across different steppings. Different chips and revisions, different ASIC behavior. The ASIC of a GK110 A1 has nothing to do with the ASIC of a GK104 A2 (I believe the only stepping that exists), and the same applies here.

We have to understand that very slight revisions of a die produce sub-versions like A0, A1, A2, etc., while somewhat larger changes jump to the next letter: B0, B1 and so on. In this case the difference is big enough to warrant a new letter, so it's clearly not even a difference like the one between the GK104 A1 and the GK104 A2 (we never even saw the former in real products, though it supposedly already had "commercial grade"). The ASIC-quality game applies within the same chip and the same stepping, and it's what the BIOS uses to set the real maximum boost of each unit (that's the only real relationship seen across every GK104 A2 model with the reference BIOS: the better the ASIC, the higher the maximum boost and, related but less directly, the maximum OC potential).
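The rule argued here (the letter marks larger revisions, the digit minor ones, and ASIC quality is only comparable within one chip and one stepping) can be sketched in a few lines. The function names are hypothetical, and this encodes the poster's reasoning rather than any vendor-defined scheme.

```python
import re
from typing import NamedTuple, Optional

class Stepping(NamedTuple):
    letter: str  # major revision: larger die changes bump the letter (A -> B)
    number: int  # minor revision: slight changes bump the digit (A0 -> A1)

def parse_stepping(code: str) -> Stepping:
    m = re.fullmatch(r"([A-Z])(\d+)", code)
    if m is None:
        raise ValueError(f"not a stepping code: {code!r}")
    return Stepping(m.group(1), int(m.group(2)))

def compare_asic(chip_a: str, step_a: str, asic_a: float,
                 chip_b: str, step_b: str, asic_b: float) -> Optional[str]:
    """Say which unit has the better ASIC reading, or None if they are not comparable.

    Readings are only compared for the same chip and the same stepping.
    """
    if chip_a != chip_b or parse_stepping(step_a) != parse_stepping(step_b):
        return None
    if asic_a == asic_b:
        return "equal"
    return "first" if asic_a > asic_b else "second"

# Tuple ordering puts B1 after every A revision.
print(parse_stepping("B1") > parse_stepping("A2"))             # True
print(compare_asic("GK110", "A1", 80.0, "GK110", "B1", 70.0))  # None
```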

Obviously every stepping has better and worse chips, but besides the fact that GPU-Z measures however it pleases, at least initially (my GTX 670 went from 80%+ ASIC to something like 67% with later GPU-Z versions :ugly:), the rules of the game only apply within "the family".

As an example, no matter how bad a G0 (Q6600) was, it rarely failed to beat even the best B3 in OC; it could be one of "the worst" within its stepping and still be in another world compared to the B3.

-

ELP3, I can't believe you take GPU-Z seriously. You know very well that the stepping, die size and transistor count come from the program's internal databases, not from actual detection; as always, a later, revised version of GPU-Z will be needed to "detect" features like the stepping (which it doesn't really do: it relies on databases). The ASIC value is possibly read through some low-level function of the card that returns a numerical value, but GPU-Z then has to interpret it and put it on the correct scale for each new GPU variant (not each manufacturer's model). That's why this value changes between GPU-Z versions on new cards: they readjust the scale to whatever they think the "real" ASIC is. As for the info on GPU-Z's first tab... it doesn't even show a unit's real maximum boost, only the model's official boost value (you can infer the maximum boost from the ASIC and the boost tables, but apparently that's too complex for w1zzard to integrate).
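A hypothetical sketch of the two behaviors described above: static fields such as the stepping are looked up in a bundled table keyed by the GPU, so new silicon shows whatever the table says, and the ASIC percentage is a raw reading mapped onto a scale that can change between versions. The table, the raw value and both scale bounds are invented for illustration (chosen to mirror the 80% to 67% GTX 670 anecdote); this is not GPU-Z's actual code.

```python
# Invented for illustration; not how GPU-Z is actually implemented.

# Static info comes from a bundled table written before any B1 silicon existed.
GPU_DB = {"GK110": {"stepping": "A1"}}

# Each tool version maps the same raw reading onto its own percentage scale.
ASIC_SCALE = {"v1": (0.0, 1.0), "v2": (0.4, 1.0)}

def reported_stepping(gpu_id: str) -> str:
    # The chip's real stepping is never read; the table value is returned as-is.
    return GPU_DB[gpu_id]["stepping"]

def reported_asic_pct(raw: float, version: str) -> float:
    lo, hi = ASIC_SCALE[version]
    return 100.0 * (raw - lo) / (hi - lo)

raw_reading = 0.8  # hypothetical raw value from the card
print(reported_stepping("GK110"))                   # A1, even on a B1 chip
print(round(reported_asic_pct(raw_reading, "v1")))  # 80
print(round(reported_asic_pct(raw_reading, "v2")))  # 67
```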

-

Yes, I've just seen it now, what a blunder. Anyway, there are already some modded BIOSes in case you're interested.

[Official] NVIDIA GTX 780 Ti Owner's Club

I don't know why the rumors said B1; anyway, maybe there isn't much difference.

I see you had already noticed the vBIOS. It seems you're getting the hang of that Ti, and of course I thought they'd have more muscle than a Titan: not much, but somewhat more.

Best regards.

-

I see that GPU-Z showing one data point on its info sheet causes more confusion than anything else. From the very people who make the software, this is what we get by looking in their databases:

GTX 780 Ti info:

http://www.techpowerup.com/gpudb/2512/geforce-gtx-780-ti.html

GTX 780 info:

http://www.techpowerup.com/gpudb/1701/geforce-gtx-780.html

We can check that they still list, and photos actually show, different steppings for the two:

GTX 780 "classic":

GTX 780 Ti:

On both dies we see two markings of different origins: a more complete, fainter one that is surely TSMC's production marking, and a simpler, clearer one that must be NVIDIA's.

The first marking gives the place of manufacture followed by a code; on the GTX 780 Ti it reads 1334B1. If I'm not mistaken, this code breaks down into three fields: year of manufacture (13), production week (the 34th of the year) and chip stepping (B1).

On the "normal" GTX 780 the code is 1308A1, that is, a chip manufactured in the eighth week of 2013 with A1 stepping.
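The breakdown of these codes can be written as a small parser. The field layout (two-digit year, two-digit week, stepping) is the reading proposed in this post, not a published TSMC specification, and the "2000 + YY" century is an assumption.

```python
import re
from typing import NamedTuple

class DateCode(NamedTuple):
    year: int
    week: int
    stepping: str

def parse_date_code(code: str) -> DateCode:
    """Split a YYWW+stepping marking like '1334B1' into its fields.

    Layout follows the post's interpretation of the marking (assumption).
    """
    m = re.fullmatch(r"(\d{2})(\d{2})([A-Z]\d)", code)
    if m is None:
        raise ValueError(f"unexpected marking: {code!r}")
    yy, ww, stepping = m.groups()
    week = int(ww)
    if not 1 <= week <= 53:
        raise ValueError(f"week out of range in {code!r}")
    return DateCode(2000 + int(yy), week, stepping)

for code in ("1334B1", "1308A1"):  # GTX 780 Ti and GTX 780 examples from the thread
    print(code, parse_date_code(code))
```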

Below this, the next line has a series of codes whose meaning I don't know, but they don't seem to add anything to the topic at hand. What we do see is that the second marking, NVIDIA's, describes in a "simpler" way the chip and the card model it will be mounted on (which is why I deduce it's NVIDIA's: that assignment happens during binning and classification, when a chip is designated for a given card).

Taking the GTX 780 as the example, the marking shows chip-variant-stepping, that is, GK110-300-A1: GK110 obviously identifies the chip, 300 identifies that this GK110 is destined for the GTX 780 (reflecting both the binning it went through and the laser-cutting of its disabled units), and finally the stepping.
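Likewise, the NVIDIA marking splits into chip, variant and stepping. This sketch only knows the one variant code given in the thread (300 for the GTX 780); any other code is reported as unknown rather than guessed, and the names are hypothetical.

```python
from typing import NamedTuple

class PartMarking(NamedTuple):
    chip: str      # GPU die, e.g. GK110
    variant: str   # bin/SKU code tying the die to a card model
    stepping: str  # silicon revision

# Only the mapping stated in the thread; other variant codes are not filled in.
KNOWN_VARIANTS = {("GK110", "300"): "GTX 780"}

def parse_part_marking(marking: str) -> PartMarking:
    parts = marking.split("-")
    if len(parts) != 3:
        raise ValueError(f"expected CHIP-VARIANT-STEPPING, got {marking!r}")
    return PartMarking(*parts)

m = parse_part_marking("GK110-300-A1")
print(m, "->", KNOWN_VARIANTS.get((m.chip, m.variant), "unknown card"))
```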

In both markings the Ti reads "B1", so there's no doubt it isn't the same stepping, unless both NVIDIA and TSMC spontaneously changed the way they mark their chips.

Guys, until we see a user take apart a Ti and find "A1" on the chip, the GPU-Z reading indicates nothing except that it doesn't detect the new stepping. It wouldn't be the first time. Search the TPU database yourself and you'll see there's a GTX 780 with B1 chips (could it be the GHz Edition? It doesn't matter much; most likely the normal GTX 780 will also end up with B1 chips, although right now the market is saturated with A1 chips and there's no guarantee of getting the new one). In theory the Ti guarantees the new chip. There's no change in functionality, but I think it's becoming very evident that it's slightly more power-efficient and overclocks better (it clocks a bit higher, and all the extra hardware it has doesn't seem to raise power consumption much).

-

Yes, I had already seen that they should be B1, but since they all read A1, it must be a GPU-Z error, or we'll have to wait for someone to remove the heatsink. It doesn't seem to be the same as the previous GK110, since it doesn't even let you back up the BIOS; most likely GPU-Z just needs an update to recognize it properly.

Regards

-

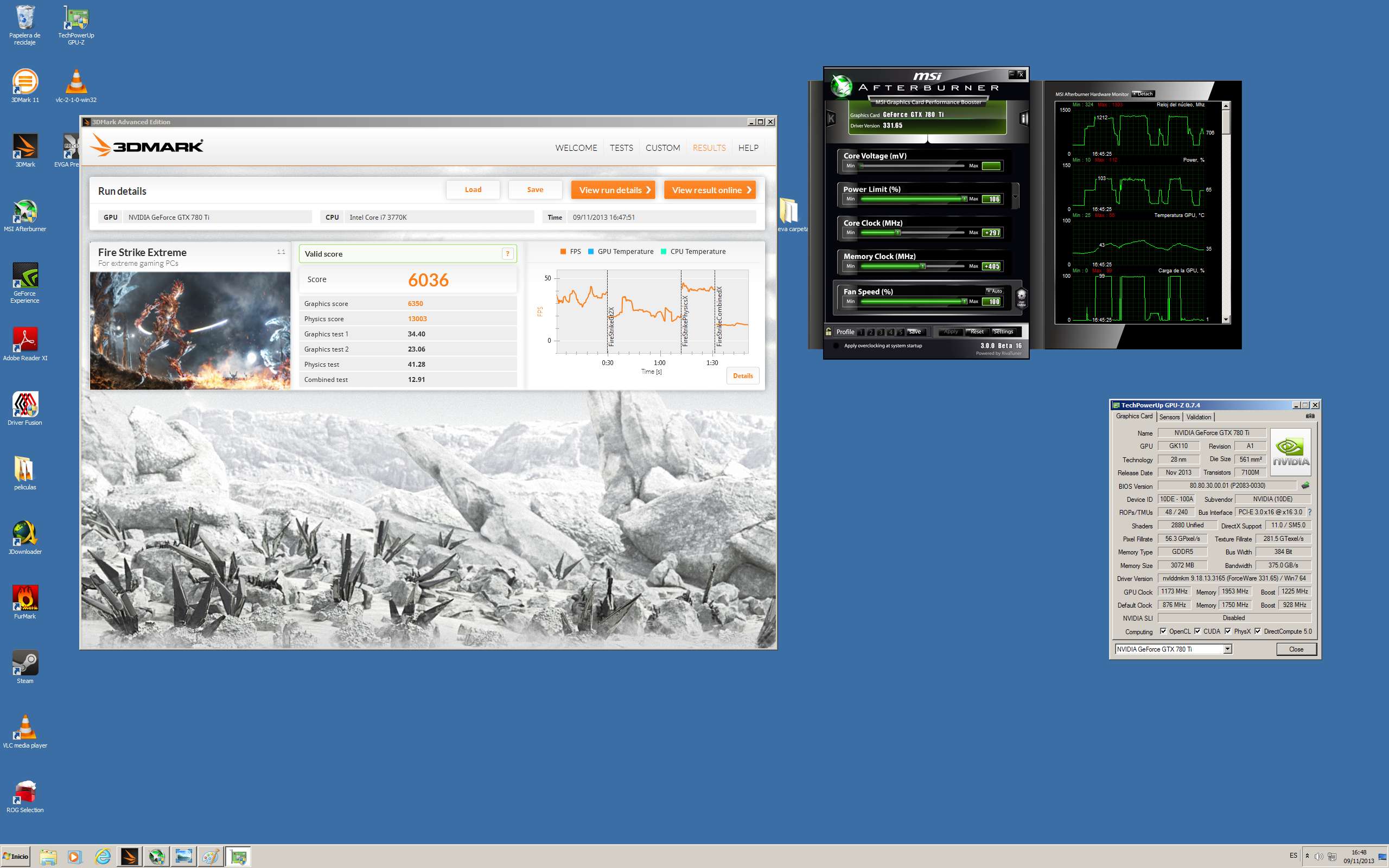

With a modded BIOS at 1.21V, holding stable frequencies.

Interesting... although not shocking. I'll comment on a few things later. For comparison, this is one of my TITANs at 1329MHz... there is indeed an 18MHz gap between them, but in this test having more bandwidth matters more than 18MHz of core, and yet the TITANs take the lead, even if only by a hair. The voltage used is more than enough, since "she", my worst TITAN, is no longer here.

One of my good TITANs does that at 1.26-1.28V... that's a lot compared to the Ti. But what I'm comparing is clock for clock, and at equal core clock and bandwidth the Ti doesn't perform better; on the contrary, it performs worse, by a hair, but worse... something very strange...

-

ALL the Tis are B1, no matter what GPU-Z says; that's certain. The issue is that GPU-Z doesn't read the new microcode properly: it only reads the header and misidentifies the chip, but believe me, ELP's cards are B1. They do change structurally and it's not a rehash, as I've read around, but the changes aren't substantial enough to make a big difference between these and the Titan. The Vcore is unlocked because on the Titans the regulator controlled the card's total power draw and, depending on the fan's consumption, cut power to the GPU as a self-regulating system. This series differs in that respect: it prioritizes the GPU's Vcore regardless of the fan, setting minimums so that throttling is barely noticeable, since NVIDIA listened to the users and board partners who pushed for this. So even if the TDP doesn't go up, more power can go to the core. In fact, the BIOSes are very, very different between these and the Titans. The TDP is configurable in this series.
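The difference in power policy described in this post can be sketched as two budget functions: in the first, the core gets whatever is left after the fan and the rest of the board; in the second, the core keeps a guaranteed minimum regardless of fan draw. This models the poster's claim only; the function names and every wattage below are hypothetical, not taken from NVIDIA's firmware.

```python
# Two hypothetical power-budget policies, following the description in the post.
# All wattages are invented for illustration.

def core_budget_shared(board_limit_w: float, fan_w: float, other_w: float) -> float:
    """Titan-style (as described): the core gets what the fan and the rest leave over."""
    return max(board_limit_w - fan_w - other_w, 0.0)

def core_budget_prioritized(board_limit_w: float, fan_w: float, other_w: float,
                            core_floor_w: float) -> float:
    """780 Ti-style (as described): the core never drops below core_floor_w,
    no matter how much the fan draws."""
    return max(board_limit_w - fan_w - other_w, core_floor_w)

LIMIT_W, OTHER_W, FLOOR_W = 250.0, 60.0, 185.0  # hypothetical figures
for fan_w in (5.0, 15.0):  # fan at low and at high speed
    print(fan_w, core_budget_shared(LIMIT_W, fan_w, OTHER_W),
          core_budget_prioritized(LIMIT_W, fan_w, OTHER_W, FLOOR_W))
```

With the fan spun up, the shared policy takes the extra fan power out of the core's share, while the prioritized one keeps the core at its floor.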

-

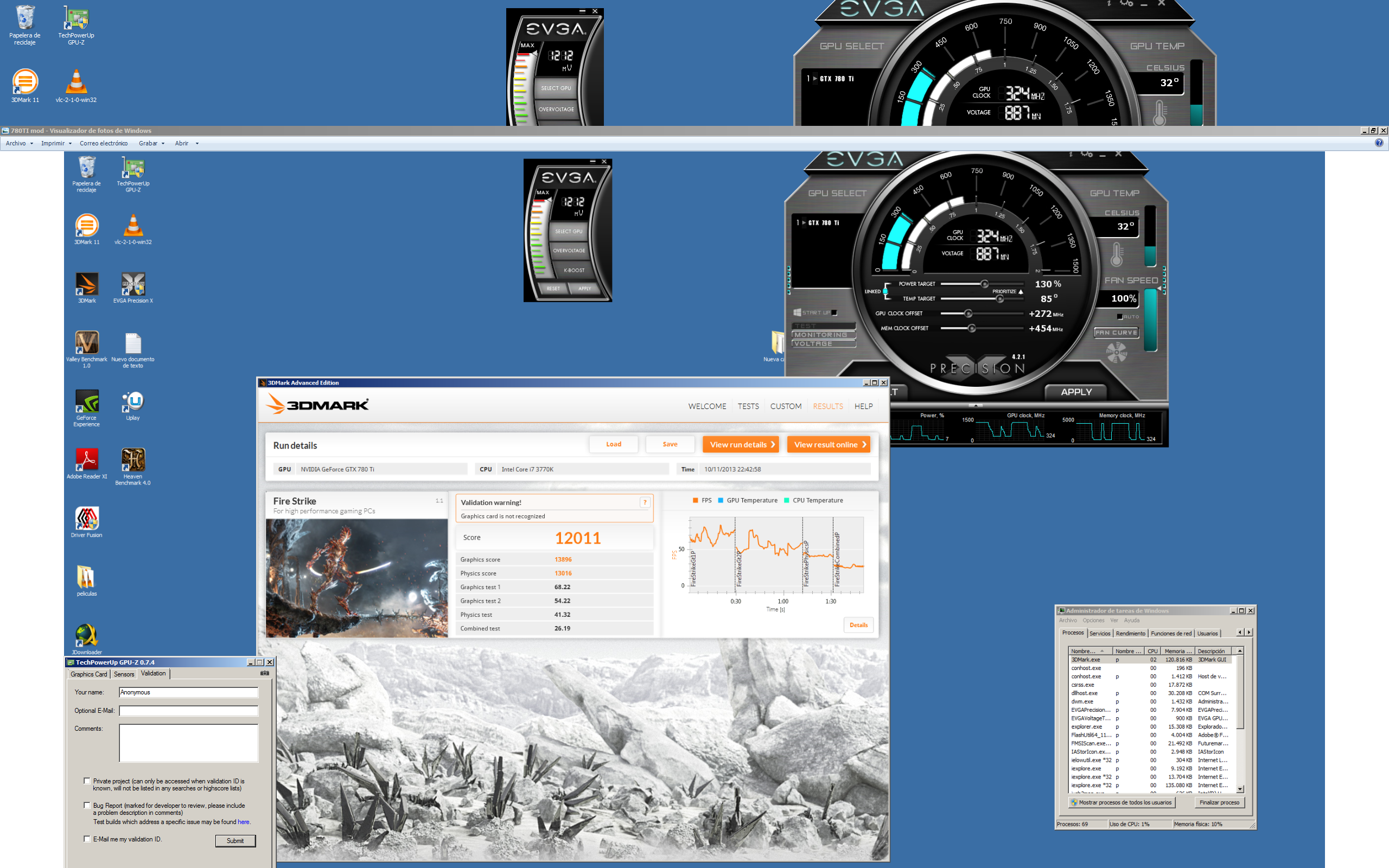

Well, let's break it down.

The card appears to be voltage-unlocked by default, but it really isn't, or at least not on the OS I use for testing, which isn't clean: the R9s went through it, I tried the MSI voltage mod on the TITAN, and on top of that it's full of downloads, etc. I mean, it's possible, I don't know, that the MSI tool, having already been modified for the TITANs, tells you that you can raise the voltage up to 1.30V, but it's just an optical illusion, because with the stock BIOS no extra voltage is actually applied with any program, neither MSI's nor EVGA's. Add to that the fact that Skynet's modded BIOS only unlocks voltage up to 1.21V, the maximum allowed by Nvidia, and the numbers add up. 1.21V does get applied.
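The "optical illusion" comes down to a clamp: whatever the slider offers, the voltage actually applied is limited by what the BIOS allows. In this sketch the stock voltage is a hypothetical placeholder; the 1.30V slider maximum and the 1.21V modded-BIOS cap are the figures from this post.

```python
def applied_voltage(requested_v: float, stock_v: float, bios_max_v: float) -> float:
    """Voltage really applied: the request, clamped between stock and the BIOS cap."""
    return max(stock_v, min(requested_v, bios_max_v))

STOCK_V = 1.15       # hypothetical stock voltage (placeholder)
SLIDER_MAX_V = 1.30  # maximum the tool's slider offers

print(applied_voltage(SLIDER_MAX_V, STOCK_V, bios_max_v=STOCK_V))  # stock BIOS: 1.15
print(applied_voltage(SLIDER_MAX_V, STOCK_V, bios_max_v=1.21))     # modded BIOS: 1.21
```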

Now the OC: it's huge, seeing how a card with such an apparently bad ASIC can do 1300MHz on air with just 1.21V... and yes, they're definitely B1. BUT the reality is that at 1300MHz it performs almost below a TITAN at 1300MHz. That's inexplicable, since it's supposed to have more CUDA cores, more TMUs and more bandwidth. At least that's what I see in synthetics. In games I can feel it, but I can't judge a single card: at 1600p one card isn't enough, and I run 4. If there were at least 2, I could say how it compares to the TITAN SLI, but with just one, as happened with the R9s, I can't comment because every framerate looks low to me.

However, I can say that for a single card it holds up very well in games at 1600p, and the boost doesn't fluctuate up to 1200MHz with the stock BIOS.

I'm going to stick with my TITANs because right now they perform almost better at equal clocks. I don't like blaming the drivers for these things, and besides it doesn't make sense with the same architecture. But I can't think of anything else... something isn't quite right... this card at 1310MHz should eat the TITAN, not the other way around, as is happening to me.

Anyway... whoever wants to can give it time. I'm sure it will end up performing as it should before long (unless Nvidia is playing us with a hypothetical but real future TITAN ULTRA). But since I already have that performance and don't feel like waiting, I'll stick with my TITANs.

JOTOLE!! Now more than ever, finish those INVINCIBLE bitches with their wonderful ASICs... hehe... my girls... how I miss them... :wall: (curse the sticky, gooey liquids)

Regards.

-

Hahahaha, they're already done... and everything checks out ;).

The commercial name itself says it: 780 Ti. This one comes to give the 780 a refresh, but Titan is a lot of Titan. I'm running tests too, against the Ti screenshots I'm seeing, and at equal clocks the Titan is still ahead.

I know there's talk that it may be a driver issue, which is strange, since they share the same architecture and whatever improves one will improve the other. There's something we're missing, but this Ti isn't here to cannibalize the Titan.

If we're right, the one that will strike again is the Titan Ultra, whatever name it ends up with. And of course, another card that will take over the €1000 price range...

Cheers...
