Hardlimit test bench

-

@Yorus Well, you already have it. Performance per watt is calculated for each individual test and for the overall score. That said, I think memory performance per watt is largely irrelevant for ordinary users, and it distorts the overall result. So I would look only at tests #1, 2 and 3, depending on how the PC will be used.

More rankings coming soon.

-

Thanks, @cobito! It will be very useful for future upgrades to my equipment, since lately I tend to buy second-hand more than new hardware.

-


Everything has already been migrated to version 3. You can see the details in the first post of this thread. Apart from the migration, the most important change is the new "Architectures" section, which lists all the architectures for which we have results. In general, there have been many changes. The page that has changed the most compared to the previous version is probably the comparisons page, which now shows additional and better-organized information.

From now on, we will gradually add the little things that are missing, mainly some rankings, additional information on the different pages and some descriptions.

Another major change, which goes hand in hand with the signatures, will be announced soon. But I will open a new thread for that.

-

What a great job.

Keep all that under lock and key, because that database is worth its weight in gold.

It would be great if, once you enter and validate the data, the signature style were applied automatically. I mean that instead of copying it, opening your profile, editing it and pasting it into the signature, it would all be done in one step.

Besides, if you choose the ranking, you're out of the game.

But what I'm getting at is, thanks for the hard work you're putting in.

-

Thanks @whoololon. Actually this works thanks to those who participate.

Updating the signature automatically is something that makes me a bit nervous for security reasons.

Regarding the ranking, it is not actually intended as a signature but as a way to share results. That is, you run the benchmark, you want to show off your results and, instead of posting a boring link, you post a cool image.

-

Ah, so.

Thanks for the clarification.

For the next version, in the final message when collecting data, fix "Retriving data".

-

I have a question about some results. Let's see if anyone knows why this could happen.

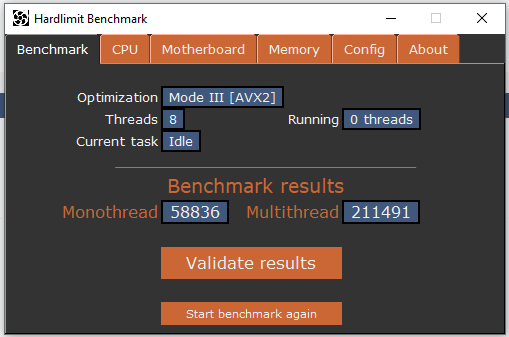

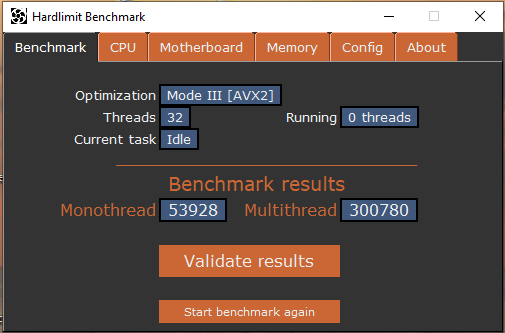

The other day, @krampak uploaded some results for the Ryzen 7 2700X. Specifically, I was intrigued by this one, run with 32 threads. This model has 8 cores with SMT. Compared with a 16-thread validation uploaded by the same @krampak on the same day, presumably from the same machine, the results are curious.

Leaving aside the memory tests, in the single-thread tests, the validation at 32 threads gets 1.2% more than the one at 16 threads. With that, we know that the conditions have been the same (same background loads) and we also know that both tests were run at stock frequency.

In contrast, in the multi-thread tests, the validation at 32 threads gets 5.3% more performance than the one at 16 threads. The way the benchmark works in multi-thread is very simple: it launches a process per thread, does its operations, calculates its scores and finally adds up the scores of all the processes. That is, if it got more points, it's because it was able to do more operations in the same time.

I can only think of two possibilities:

- That when executing 32 threads, the benchmark is monopolizing a greater percentage of the CPU, taking processing time away from background programs.

- That it is making better use of the processor's pipelining.

If I remember correctly, this behavior was also noticed by @kynes a while ago. A third possibility is a bug in the program, but after reviewing the code I can't see how it could be happening. Besides, the result of the integer test is practically the same in both cases; a bug in the program would have had to show up in all the tests.

Anyway, I leave it there in case you feel like pondering for a while.

-

What I'm saying is that, with all the fuss about UserBenchmark, you need a serious site to compare processors... that's it.

-

As I mentioned in the other thread, the program is signed with a CA approved by Microsoft. So from now on, this becomes something serious.

The Windows SmartScreen warning still appears. In theory, the program (and especially the certificate) has to gain reputation. There are three ways to gain reputation:

· Leave the executable in a public place. Over time, it will gain points. This is done.

· Download it from different sites. The more it is downloaded and run, the more points it will gain. I read that it works better if done from Internet Explorer or Edge, but in reality I think it makes little difference. The point is simply to download and run it (no need to run the tests or validate). This is where you can lend a hand.

· Leave the executable in any folder (such as the downloads folder) so that the Windows telemetry program can see it. In this way, in a matter of days, the warning message will disappear.

@whoololon said in Hardlimit test bench:

What I'm saying is that, with the fuss that's been made about the UserBenchmark, a serious site is needed to compare processors... that's all I'm saying.

Well yes, it may be a good time to get things moving. I have some ideas...

By the way, the typo in the text that you mentioned has already been corrected.

Regarding the central, there have been a couple of minor updates. The most important ones (details in the first post of the thread):

· The OC ranking is now shown in each model's tab.

· The Spanish version is now shown if a browser set to Catalan, Galician, Basque, Asturian or Occitan is detected.

-

I've been taking a look, and it's not clear to me whether the results shown, both in the CPU description and in the ranking table, are the best scores (a CPU overclocked to the hilt in a configuration tuned for tests like Xevipiu), the average of all validated results for that CPU, or only those run at stock frequency...

Thanks in advance.

-

@whoololon Both in the CPU description (cpu.php) and in the various processor and architecture rankings, only results without OC (stock frequency) are used to compute the average. Overclocked results appear in a separate table within each model's tab (if there are any).

In the results of a validation (result.php), the data corresponds to the validation in question, without taking into account other validations. The user ranking table that appears both on the home page and in the validation result takes into account individual validations, including overclocked processors and without making averages.

In summary: the tabs and rankings calculate an average of the validations at stock frequency. If there are overclocked results for a model, they are shown in the tab separately. The user rankings show individual results (without average) including overclocked validations.
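To make the three cases concrete, here is a minimal sketch of the aggregation rules described above. It is only an illustration, not the code the central runs: the field names, the function names and the sample scores are all made up, and sorting the user ranking by score is an assumption.

```python
from statistics import mean

# Hypothetical validations for a single model (invented data).
validations = [
    {"user": "a", "score": 1000, "overclocked": False},
    {"user": "b", "score": 1020, "overclocked": False},
    {"user": "c", "score": 1150, "overclocked": True},
]

def model_average(validations):
    """Per-model figure: average of the stock-frequency validations only."""
    stock = [v["score"] for v in validations if not v["overclocked"]]
    return mean(stock) if stock else None

def overclocked_results(validations):
    """Separate table for a model: overclocked validations, not averaged."""
    return [v for v in validations if v["overclocked"]]

def user_ranking(validations):
    """User ranking: every individual validation, OC included, no averaging.
    Ordering by score (best first) is an assumption of this sketch."""
    return sorted(validations, key=lambda v: v["score"], reverse=True)

print(model_average(validations))
print(overclocked_results(validations))
print([v["user"] for v in user_ranking(validations)])
```

With this data, the overclocked validation tops the user ranking but does not affect the per-model average.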

I don't know if that was what you were asking.

-

Yes, that was it; thanks for the clarification.

-

@cobito There is something that has me puzzled. My laptop's CPU has 4 cores and 4 threads, but it gains about 25,000 points in multithreading if I set 8 threads instead of 4. I understand that this must be because it monopolizes more processor time, but then the results would not be fully consistent unless the maximum possible number of threads is used. Would there be any way to test more than 8 threads, to see what result it gives? If it is marginally higher or similar, it is a matter of CPU usage. If it is much higher, it must be a bug in the benchmark.

-

@kynes First of all, there is a discrepancy between the program's results and the central's that has not been corrected yet, because I am still thinking about how to give the most reliable result possible. The program uses the old method, which takes the maximum result of each test. The central averages the 10 samples per test. As a result, the program shows larger variations between runs, while on the central those differences (which can be caused by background processes) are filtered out and are less noticeable.
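A quick sketch of the difference between the two methods, assuming 10 samples per test. The scores are invented (the first one is an artificial spike) and the function names are hypothetical; this is not the actual code of the program or the central.

```python
from statistics import mean

# Invented scores for the 10 samples of one test; the first is a spike.
samples = [1180, 1002, 998, 1005, 1001, 997, 1003, 999, 1000, 1004]

def program_score(samples):
    """Old method (program): keep the maximum sample of the test."""
    return max(samples)

def central_score(samples):
    """Central: average all the samples of the test."""
    return mean(samples)

print(program_score(samples))
print(central_score(samples))
```

A single spike sets the whole score with the maximum, while in the average it only counts as one sample out of ten.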

Having said that, of the 4 results you have sent (2 with 4 threads and 2 with 8 threads), I have chosen the extremes to have the worst possible case: the one that gave the lowest score in 4 threads and the one that gave the highest score in 8 threads. The difference in the total multithread score is 4.7%. For reference, the differences between the two results at 4 threads and at 8 threads are 1.3% and 1.2% respectively.

To me, a 4.7% difference between the extremes versus a 1.2% difference between validations with the same number of threads seems normal, given how many things Windows 10 runs in the background.

But something that could be a fault in the program (and one that would be as difficult to diagnose as to correct, if it really is a fault) is that in the tests with 8 threads there is a peak in the scores of the first sample. That is probably why you measured such a large difference in the program's results, which take those peaks, while in the central's results the difference is much smaller because the average is calculated.

I will see if I have a moment and prepare the version without thread limit that you mention so that you can test that.

-

@kynes Here is a modified version without a thread limit. In general, it seems that the multi-threaded result is proportional to the number of cores regardless of the excess threads, although there is a slight improvement when the number of threads exceeds what the processor has. But on one machine the thread synchronization failed without being detected, producing a meaningless result. That PC has 4 cores with HT, and it seems to fail from 32 threads onward.

This version only works in FPU and AVX 2 mode and the results are not valid.

-

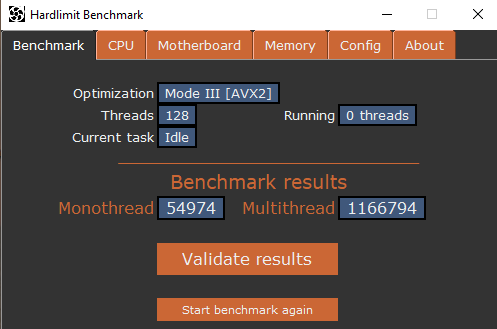

I understand that if you took the average of the threads, the result would be coherent, but there is one that is going off the rails. I'm going to try with 128 to see what happens.

-

With 128 threads I think I hold the world record in multithreading:

Well, and if I don't have it, I'll try it with 256 threads to see what happens

-

@kynes There is a clear difference here. I am seeing it in my case too. I suppose that in the end I will have to apply a kind of truncated mean: something like discarding the two highest values and the two lowest, and averaging the remaining 6. It is clear that the outliers at the beginning distort the measurement.
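Roughly, the truncated mean I have in mind would look like this. It is only a sketch: the function name and the sample values are invented, and nothing like this is implemented yet.

```python
def truncated_mean(samples, trim=2):
    """Average what is left after dropping the `trim` highest and `trim` lowest samples."""
    if len(samples) <= 2 * trim:
        raise ValueError("not enough samples to trim")
    kept = sorted(samples)[trim:len(samples) - trim]
    return sum(kept) / len(kept)

# Invented scores for 10 samples; the first one is an artificial initial spike.
samples = [1180, 1002, 998, 1005, 1001, 997, 1003, 999, 1000, 1004]

print(truncated_mean(samples))
```

With 10 samples and `trim=2`, the spike and the other three extremes are discarded and only the 6 central values are averaged.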

I am also going to review the synchronization mechanism, to see if the fault was there.

By the way, be careful with the 256 threads, because if the system crashes and the processes lose communication with each other, they can remain permanently waiting consuming 100% of all the cores and you will need to either close each process manually or restart the PC.

-

@kynes said in Hardlimit Test Bench:

With 128 threads I think I have the world record in multithreading:

Well, and if I don't have it, I'll test it on 256 threads to see what happens

There the synchronization has failed. Basically, you are running a handful of threads at different times, and the scores are being added up as if they had all run at once.
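To illustrate why that sum is meaningless, here is a small sketch of the kind of check that would catch it. Everything in it is hypothetical (the `Run` record, the start-time tolerance, the numbers); it is not how the program synchronizes its processes today.

```python
from dataclasses import dataclass

@dataclass
class Run:
    start: float  # seconds at which the process began measuring
    end: float    # seconds at which it finished
    score: float

def synchronized(runs, tolerance=0.1):
    """True if all processes started within `tolerance` seconds of each other."""
    starts = [r.start for r in runs]
    return max(starts) - min(starts) <= tolerance

def total_score(runs, tolerance=0.1):
    """Add up the per-process scores, refusing to do so if the runs were staggered."""
    if not synchronized(runs, tolerance):
        raise ValueError("processes did not run at the same time; the sum is meaningless")
    return sum(r.score for r in runs)

# Two processes measured together: the sum reflects simultaneous throughput.
synced = [Run(0.00, 1.00, 100.0), Run(0.02, 1.02, 100.0)]

# Two processes measured one after the other: each had the CPU to itself,
# so adding their scores overstates what the machine can do at once.
staggered = [Run(0.0, 1.0, 100.0), Run(1.5, 2.5, 100.0)]

print(total_score(synced))
print(synchronized(staggered))
```

In the staggered case each process gets a full score on its own, which is how a sum can end up far above what the processor delivers with everything running at once.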